There’s a new behavior quietly taking over coffee shops, airports, conferences, and probably the cubicle next to yours.

People are whispering into their phones.

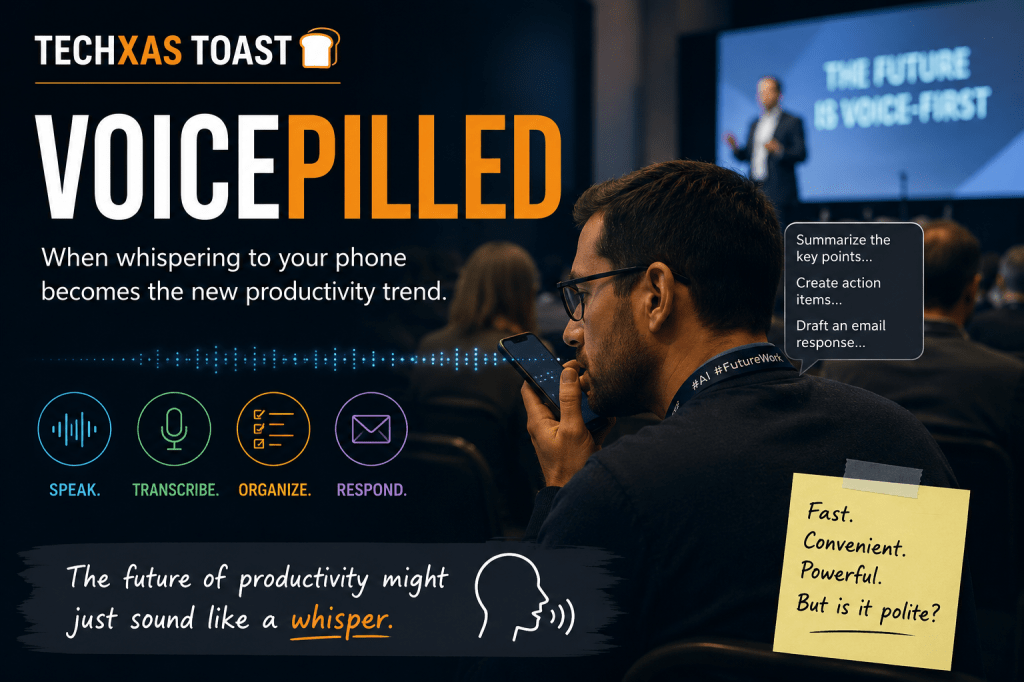

Not for phone calls. Not for voice messages to friends. But to take notes, draft emails, create reminders, summarize meetings, and interact with AI assistants. The trend has become common enough to earn its own label:

“Voicepilled”

The term was introduced last year by Reid Hoffman, co-founder of LinkedIn, Manas AI, and Inflection AI.

If you have not encountered it yet, you probably will soon. I recently experienced it firsthand at a conference. During a presentation, the person sitting next to me spent much of the session softly whispering into their phone, likely dictating notes into an AI app or voice assistant. At first, I thought they were quietly talking to someone. Then I realized this was their workflow.

And honestly? It was incredibly distracting.

The strange thing is… I also completely understood why they were doing it.

The Appeal of Voice-First Productivity

We are entering an era where typing increasingly feels optional. AI-powered transcription tools, voice assistants, and smart note-taking apps have become remarkably good. Instead of pulling out a laptop or pecking away on a tiny phone keyboard, people can now simply whisper:

- “Draft a response to that email.”

- “Summarize the speaker’s main points.”

- “Remind me to follow up next Tuesday.”

- “Create a task list from this meeting.”

The convenience is undeniable. Speaking is faster than typing for most people. It feels natural. Efficient. Frictionless.

In many ways, voice interaction may become the default interface for AI systems. We are already seeing it happen with tools like OpenAI’s ChatGPT voice mode, Google Assistant integrations, and increasingly sophisticated transcription apps.

Typing may eventually feel as outdated as flipping through a Rolodex to find a phone number.

The Social Problem Nobody Talks About

But here’s the issue: whispering is still noise.

And unlike typing, whispering creates a uniquely irritating kind of ambient distraction. It sits in that uncanny valley between silence and conversation. Your brain keeps trying to process it because it sounds like someone is speaking directly beside you.

At conferences, in classrooms, on airplanes, or in quiet public spaces, “voicepilled” behavior creates a new social tension:

- The person dictating values efficiency.

- Everyone around them values silence.

Neither side is necessarily wrong.

We already went through similar growing pains with speakerphones, Bluetooth headsets, and Zoom meetings in public spaces. Society eventually developed etiquette around those behaviors. We may now need a similar etiquette for AI-driven voice workflows.

The Future Sounds Like Murmuring

What fascinates me most is that this trend feels like an early preview of a much larger shift. We are moving toward an environment where people constantly interact with AI in real time.

Not just occasionally.

Continuously.

Imagine offices where employees quietly dictate thoughts throughout the day. Students verbally brainstorming with AI tutors. Professionals walking through airports muttering responses to emails while their devices organize their schedules.

The future of computing may not look like people staring silently at screens.

It may sound like a room full of murmuring.

So… Is Being Voicepilled Good or Bad?

Probably both.

Voice-based AI interaction is incredibly useful. It lowers friction, increases accessibility, and lets people capture ideas instantly. For productivity, it is genuinely powerful.

But socially, we are still figuring it out.

Right now, being “voicepilled” can make someone look highly efficient or deeply annoying, depending on who is sitting nearby.

And maybe that’s the real story here: technology changes faster than etiquette does.

The devices learned how to listen before we learned when to whisper.

Note: This blog post was written with the assistance of ChatGPT, an AI language model.
