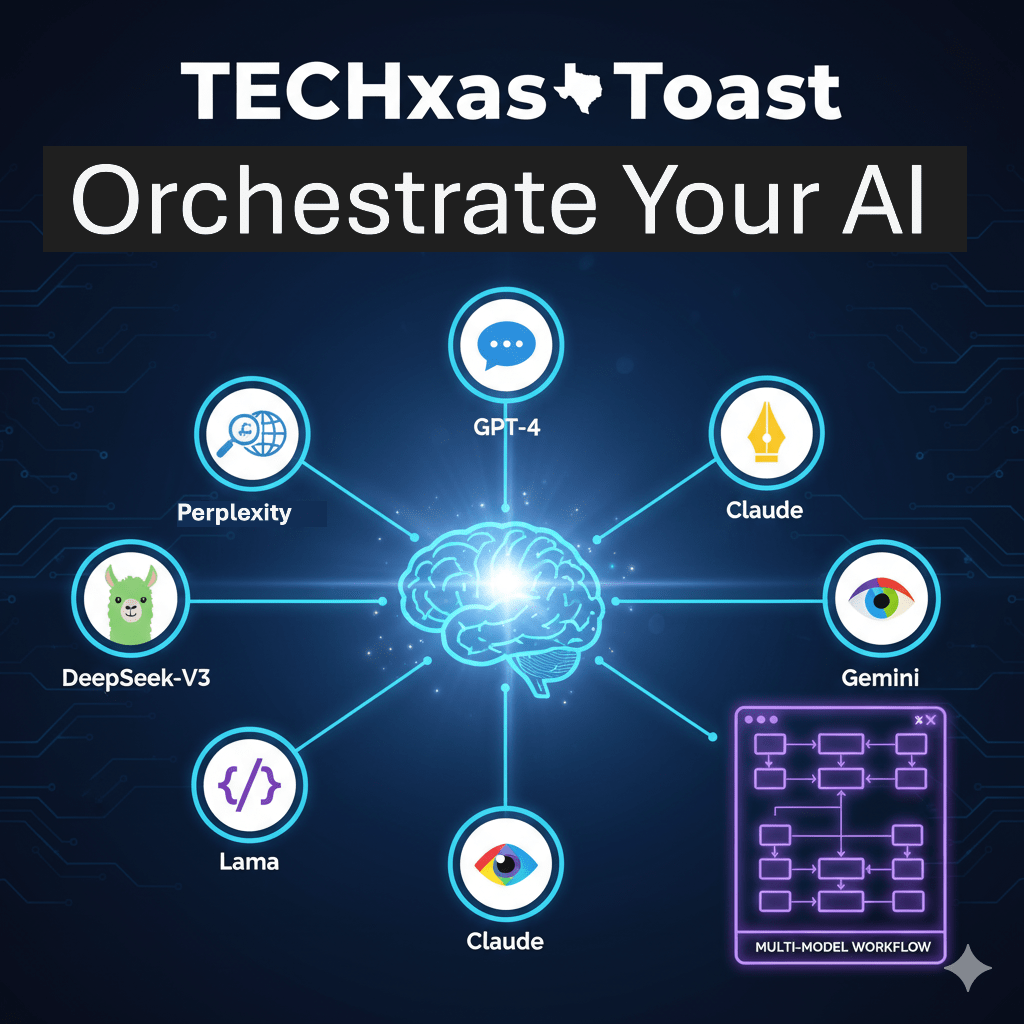

Howdy, tech enthusiasts! Welcome back to TECHxas Toast. Today, we’re diving into a topic that’s quickly becoming crucial for anyone serious about AI: multi-model workflows.

If you’re still thinking of AI as a single, all-knowing entity, it’s time to adjust your Stetson! The smartest way to harness AI isn’t to rely on one model for everything, but to strategically combine their unique strengths. Think of it like assembling a dream team for your digital tasks.

Ready to see how you can elevate your AI game from basic prompting to sophisticated orchestration? Let’s saddle up!

The AI All-Stars: Understanding Each Model’s Superpower

Just like you wouldn’t use a hammer for every construction task, you shouldn’t use a single AI model for every digital challenge. Each model (or model family) has its own set of talents:

- GPT-4 (and newer): The Analytical Workhorse. This is your go-to for complex reasoning, professional content, and when you need reliable accuracy across a broad range of general knowledge. It’s the sturdy truck that can haul almost anything.

- Claude (e.g., Opus, Sonnet): The Professional & Nuanced Wordsmith. When you need long-form content, strategic thinking, detailed attention to tone, or robust coding, Claude shines. It’s your seasoned copywriter and meticulous editor rolled into one.

- Gemini (e.g., Pro, Flash): The Data Powerhouse. Built for speed and handling massive amounts of data, Gemini excels at real-time visual and multimodal processing. It’s perfect for heavy data analysis, classification, and understanding content across text, images, and even audio/video.

- DeepSeek-V3: The Technical & Cost-Effective Specialist. For advanced mathematics, competitive coding challenges, or when you need high performance in specialized technical areas at a great price, DeepSeek-V3 is a dark horse contender.

- Llama (Open Source): The Friendly & Fast Communicator. If you need quick, conversational responses, chat applications, or a model that can be efficiently fine-tuned and run locally, Llama is your affable companion.

- Perplexity: The Citing Researcher. Built around real-time web search with cited sources, Perplexity is invaluable when you need current, verifiable information for research and content creation.

Beyond Single Prompts: Strategies for Multi-Model Mastery

The real magic happens when you start linking these models together. Think of these as blueprints for advanced AI applications:

- The Handoff Method (Chaining): Break a big problem into smaller steps. Have Model A handle Step 1, then pass its output to Model B for Step 2, and so on. This ensures each part is handled by the best tool for the job.

- Result: Superior accuracy and depth in your final output.

- Parallel Test (Voting): For critical tasks, run the same prompt through 2-3 different top-tier models. Compare their outputs.

- Result: Increased confidence and higher quality, whether you select the best output or combine the strengths of each.

- Critique Loop (Refinement): Let one model create, then have another model critically review and refine the output. This is like having an expert editor on standby.

- Result: A more polished, strategic final product with fewer errors, since the reviewing model can catch issues the first one missed.

- Pre-Processing Chain: Use a faster, cheaper model for initial data clean-up, classification, or extraction. Then, feed the refined data to a more powerful (and often more expensive) model for complex analysis.

- Result: Significant cost savings and cleaner data for the heavy-duty thinking.
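
To make those four blueprints concrete, here’s a minimal Python sketch. It assumes each model is wrapped as a plain function that takes a prompt and returns text; the `Model` type, the function names, and the critic’s "OK" convention are all hypothetical choices for illustration, not any vendor’s actual API.

```python
from collections import Counter
from typing import Callable, Sequence

# Assumption: a "model" is any function from prompt text to response text.
# A real API client would need a thin wrapper to fit this shape.
Model = Callable[[str], str]


def chain(steps: Sequence[Model], prompt: str) -> str:
    """Handoff: each model's output becomes the next model's input."""
    text = prompt
    for step in steps:
        text = step(text)
    return text


def vote(models: Sequence[Model], prompt: str) -> str:
    """Parallel test: ask every model and return the most common answer."""
    answers = [model(prompt).strip().lower() for model in models]
    return Counter(answers).most_common(1)[0][0]


def critique_loop(writer: Model, critic: Model, prompt: str, rounds: int = 2) -> str:
    """Refinement: the critic reviews each draft and the writer revises it."""
    draft = writer(prompt)
    for _ in range(rounds):
        feedback = critic(draft)
        if feedback == "OK":  # hypothetical convention: critic replies OK when satisfied
            break
        draft = writer(
            f"{prompt}\nRevise using this feedback: {feedback}\nDraft: {draft}"
        )
    return draft


def preprocess_then_analyze(cheap: Model, strong: Model, records: Sequence[str]) -> str:
    """Pre-processing: a cheap model cleans each record, a strong model sees the batch."""
    cleaned = [cheap(record) for record in records]
    kept = [item for item in cleaned if item]  # drop records that cleaned down to nothing
    return strong("\n".join(kept))


if __name__ == "__main__":
    # Stand-in "models" so the sketch runs without any API keys.
    shout = lambda text: text.upper()
    exclaim = lambda text: text + "!"
    print(chain([shout, exclaim], "howdy"))
```

Simple majority voting like this only works for short, comparable answers such as labels or yes/no decisions. For long-form outputs you’d more likely hand the candidates to another model (or a human) to pick or merge.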

Real-World Pairings: Putting it All Together

Here’s how these strategies translate into action:

- For Complex Research & Strategy: Start with Perplexity to gather and cite the latest data. Feed the raw information to Gemini for quantitative analysis and trend identification. Finally, give Claude the structured analysis to draft a professional, strategic executive summary.

- For Robust Code Generation & Review: Ask DeepSeek-V3 to draft the initial, technically complex code. Then, pass that code to GPT-4 for a thorough security review, error handling implementation, and detailed documentation.

- For Long Document Summarization: Use Gemini’s massive context window to process a stack of documents, extracting key decisions. Then, hand those bullet points to Claude to weave into a coherent, narrative summary.

- For Dynamic Content Creation: Have Claude generate your high-quality, long-form blog post. Then, send the draft to a Llama model to quickly adapt headlines and intros for various social media platforms with a friendly, engaging tone.
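
As a rough sketch of how a pairing like the research-and-strategy one could be wired up, the Python below describes each stage with a name, a model, and a prompt template, then runs them in order while keeping every intermediate output. The `Stage` class, `run_pipeline`, and the stand-in models are hypothetical; in practice each `model` would wrap whichever service you assign to that stage.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Assumption: a "model" is any function from prompt text to response text.
Model = Callable[[str], str]


@dataclass
class Stage:
    name: str      # label used in the trace
    model: Model   # whichever model is assigned to this stage
    template: str  # prompt template; {input} is filled with the previous output


def run_pipeline(stages: List[Stage], topic: str) -> Tuple[str, List[Tuple[str, str]]]:
    """Run the stages in order and keep each intermediate output for inspection."""
    trace: List[Tuple[str, str]] = []
    text = topic
    for stage in stages:
        text = stage.model(stage.template.format(input=text))
        trace.append((stage.name, text))
    return text, trace


if __name__ == "__main__":
    # Stand-in models that just tag their input, so the flow is visible.
    def tagger(tag: str) -> Model:
        return lambda prompt: f"[{tag}] {prompt}"

    stages = [
        Stage("research", tagger("search"), "Find recent, cited data on: {input}"),
        Stage("analysis", tagger("analyze"), "Identify trends in this data: {input}"),
        Stage("summary", tagger("write"), "Draft an executive summary of: {input}"),
    ]
    final, trace = run_pipeline(stages, "Texas solar adoption")
    for name, output in trace:
        print(f"{name}: {output}")
```

Keeping the trace around is a deliberate choice: when the final summary looks off, you can see which stage introduced the problem instead of rerunning the whole chain.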

The Bottom Line

In the rapidly evolving world of AI, mastery isn’t about finding the one best model; it’s about understanding the strengths of many models and orchestrating them into powerful workflows. By doing so, you’re not just using AI; you’re building a highly efficient, specialized team that can tackle virtually any challenge.

So go ahead, experiment, mix, and match! You’ll be amazed at the level of sophistication and quality you can achieve.

Note: This blog post was written with the assistance of Gemini, an AI language model.
